Category: Digital Communication

-

The communication of the communicators who create communication

A digital product is something that processes and displays information. A digital product is communication, whether it is a social network or a home-banking app,…

-

Electric cars alone are not enough to cut transport emissions

The document “Car electrification and Urban Mobility”, published by the International Association of Public Transport (UITP), brings the problem of decarbonisation and sustainable mobility in cities back into focus. The Policy Brief…

-

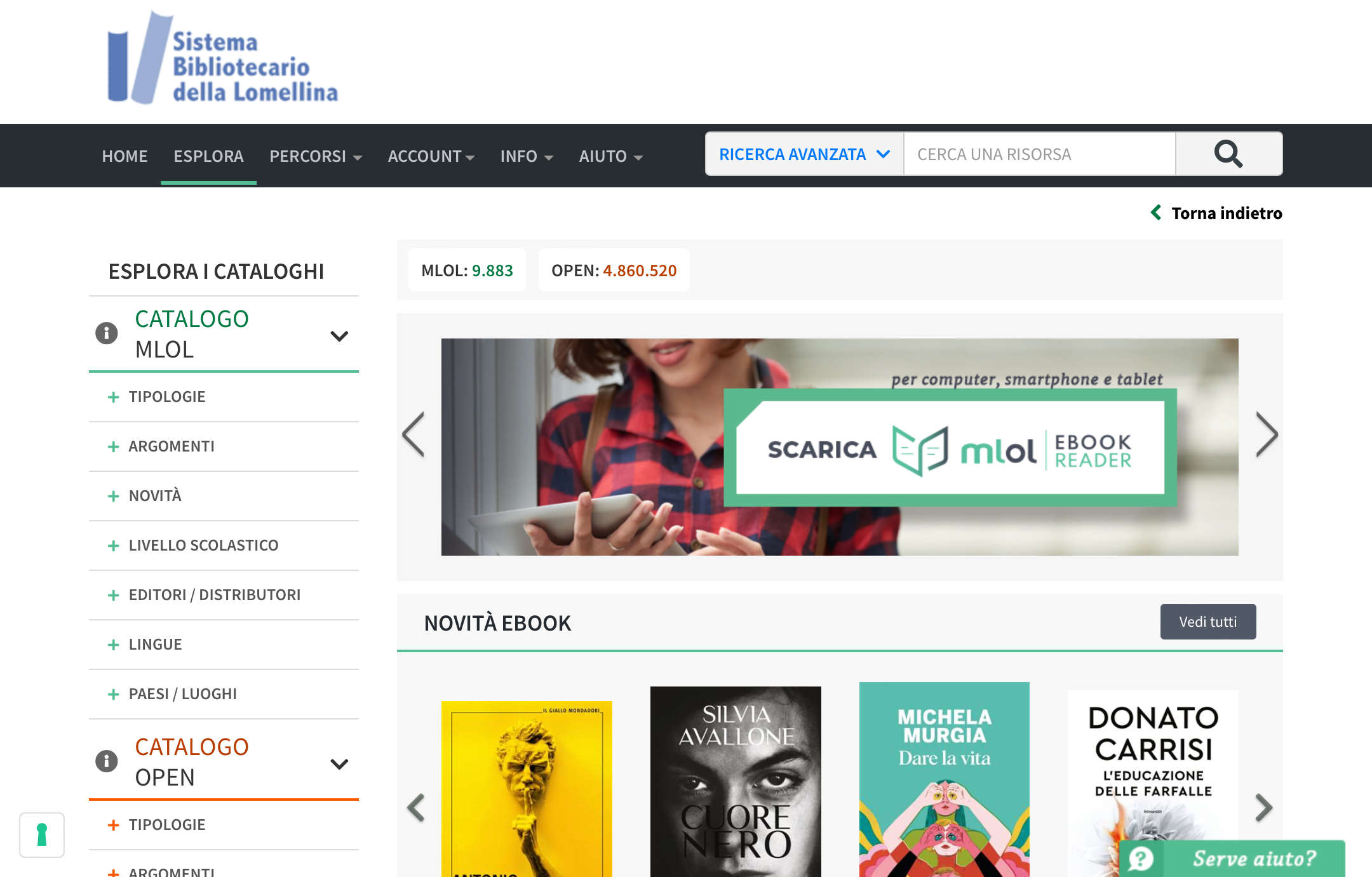

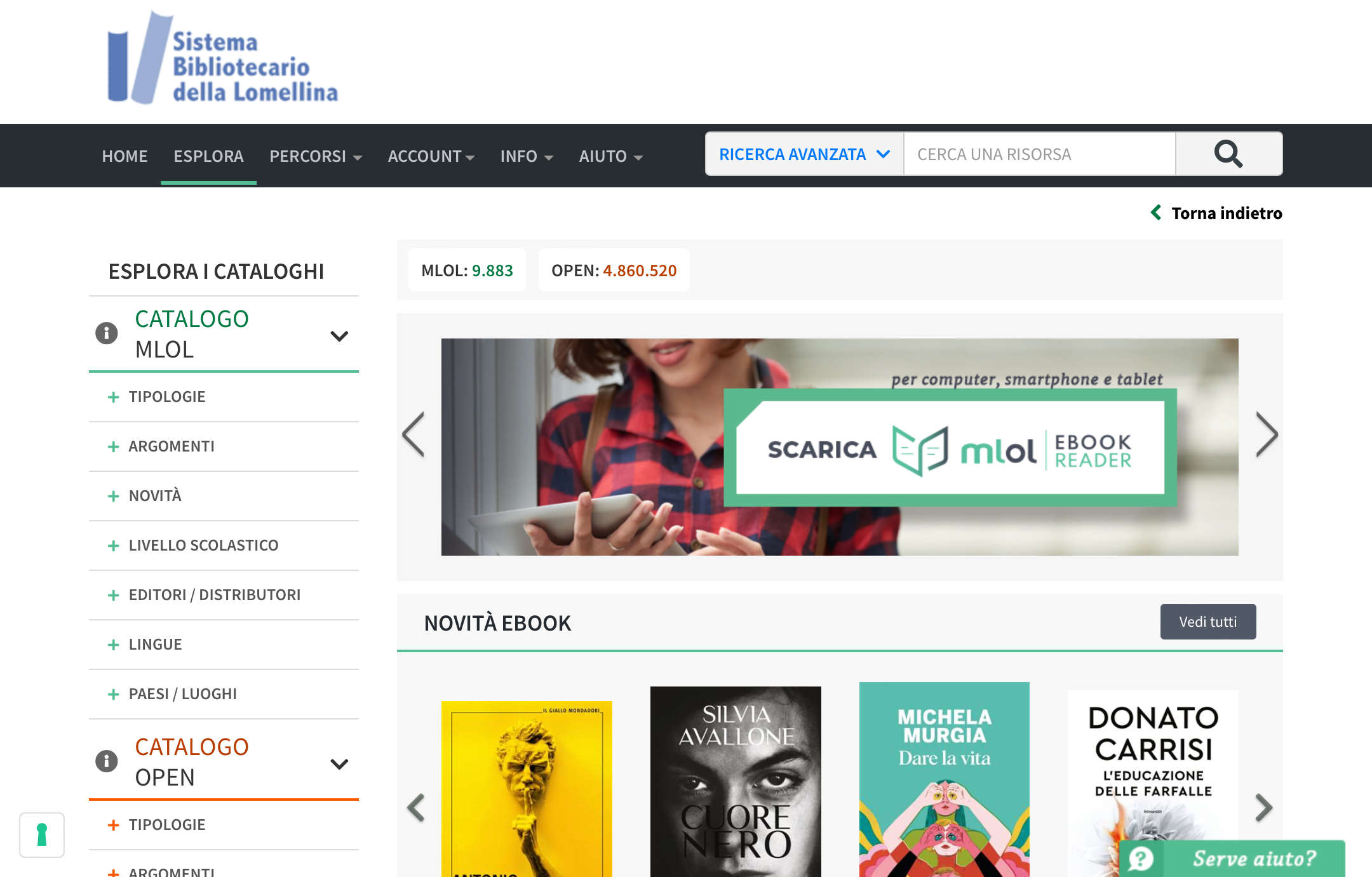

Reading newspapers, magazines and books for free on Medialibrary online

Medialibrary online is the public service for reading newspapers, magazines, ebooks and other open resources for free. I discovered it by chance while looking at the notice board of my town's library, but once…

-

Will AI really deliver on its promises?

In the episode “Investors Have Sky-High Hopes for AI. Can the Tech Deliver?” of Bloomberg's Big Take podcast, Max Chafkin points out that only Microsoft is profiting economically from the introduction of AI, while…

-

AI on Google Maps

Like practically every digital service by now, Google Maps is also experimenting with AI. On its blog, Google writes that the app will be able to give more precise suggestions by processing the data found on…

-

The courses of the EIT, the European Institute of Innovation and Technology

The EIT is the European Institute of Innovation and Technology, the EU initiative for training and for supporting entrepreneurship and research in the European Union in the fields of Urban Mobility, Climate Change,…

-

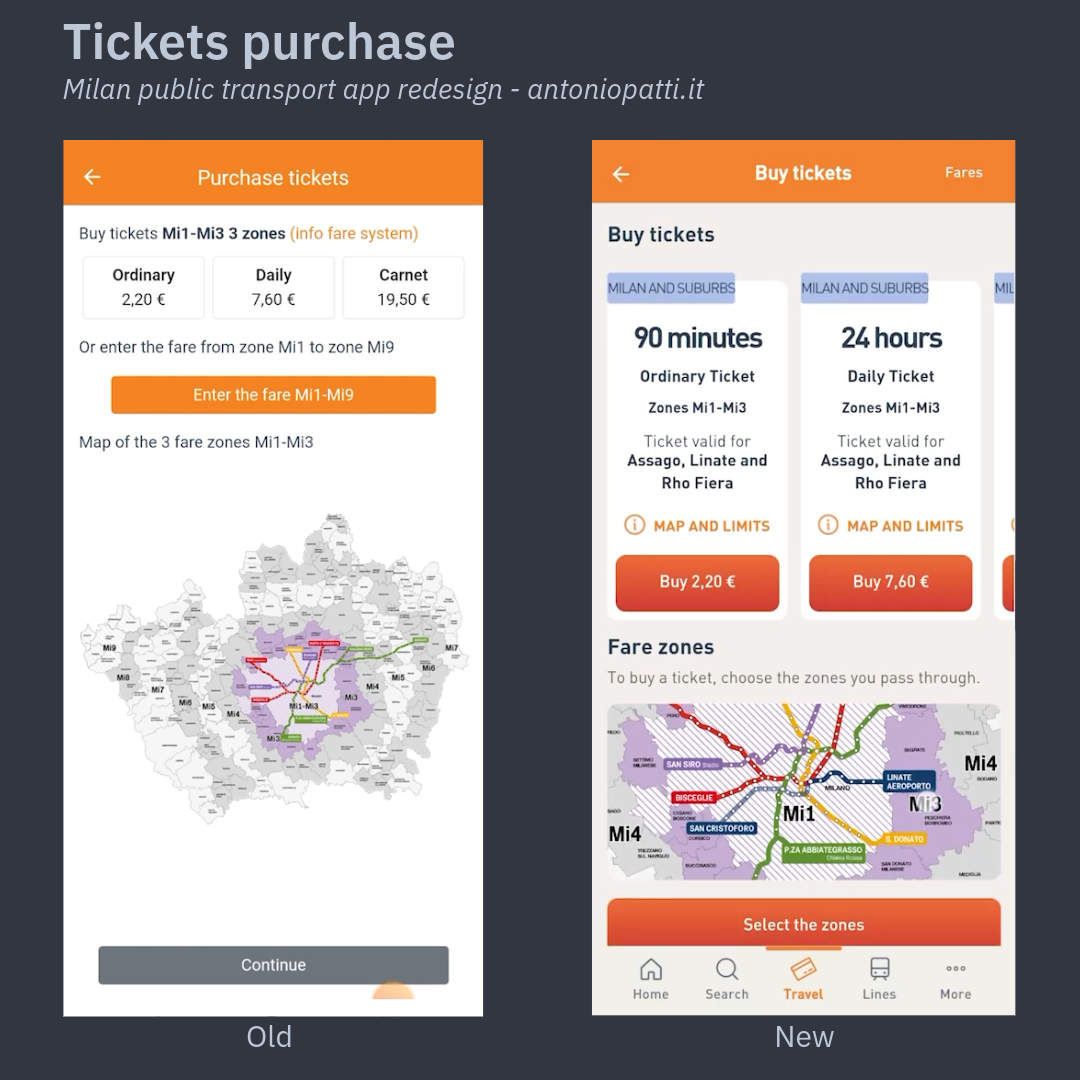

🇬🇧 Milan local public transport app redesign

I have been working at Azienda Trasporti Milanesi (Milan Public Transportation Company) since 2008 and over these years, I have been involved in almost all the B2C digital products. The…

-

🇮🇹 The redesign of Milan's local public transport app

I have been working at Azienda Trasporti Milanesi since 2008, and over these years I have managed almost all of its B2C digital products. The ATM Milano app, however, is the project I am most…

-

🇬🇧 Care, betray and abandon – the story of digital products

The life of a product manager and of his product goes through phases that I have symbolically called care, betrayal and abandonment. Care: imagining a product, drawing up documentation, talking…

-

🇮🇹 Care, betray and abandon – the story of digital products

The life of a product manager and of his product goes through phases that I have symbolically called care, betrayal and abandonment. Care: imagining a product, drawing up the…

-

🇬🇧 What is a digital product?

Over the last 16 years I have worked on many digital products, from their inception to the final release and the improvements that followed. I’ve had a lot of experience both as…

-

🇮🇹 What is a digital product?

What is a digital product? The first in a series of posts in which I will explain my work as head of digital products and Digital Product Manager.

-

🇬🇧 Improving car climate controls using voice assistants

The usability of the digital screens in new cars is both a safety and a CX problem. In my “Cars digital experience analysis” article I already listed the main functions that should always be accessible…

-

🇬🇧 Cars digital experience analysis and some ideas

Digital cockpits and infotainment screens are becoming some of the most important components of modern car interiors. As an automotive enthusiast and digital professional, I’ve always looked at them as a…

-

🇬🇧 Improving passenger behaviour using nudge theory

In the article The Amazing Psychology of Japanese Train Stations, the author writes about the nudge theory strategies adopted by West Japan Railways to improve passenger behaviour and…

-

🇮🇹 Improving the behaviour of public transport passengers with nudge theory

The interesting article The Amazing Psychology of Japanese Train Stations discusses the techniques adopted by West Japan Railways to curb passengers' improper behaviour and to reduce suicides…

-

The communication of the communicators who create communication

A digital product is something that processes and displays information. A digital product is communication, whether it is a social network or a home-banking, public transport or home-automation app. A product that communicates well is a product created through intense communication among dozens or even hundreds of…

-

Electric cars alone are not enough to cut transport emissions

The document “Car electrification and Urban Mobility”, published by the International Association of Public Transport (UITP), brings the problem of decarbonisation and sustainable mobility in cities back into focus. The Policy Brief states that the ecological transition cannot be reduced to switching private vehicles to electric motors, because cars do not make up a transport system…

-

Reading newspapers, magazines and books for free on Medialibrary online

Medialibrary online is the public service for reading newspapers, magazines, ebooks and other open resources for free. I discovered it by chance while looking at the notice board of my town's library, but once I requested access I found a service that deserves to be known by as many people as possible. MLOL is a platform that allows its members to…